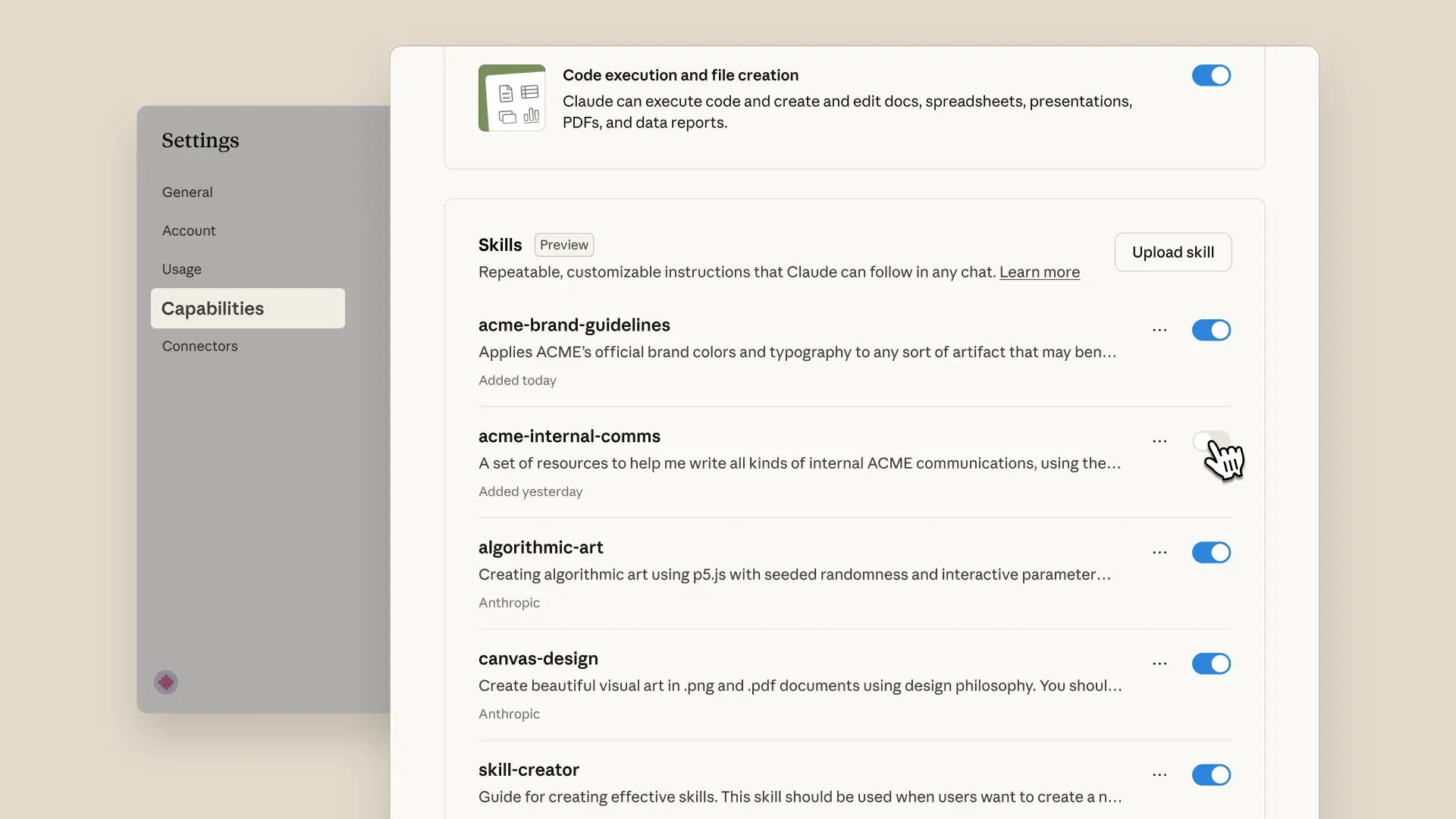

Claude will generate the skill: its instructions, the connectors and computer-use moves it calls, the kill-switch rules baked in, and a definition of done. If you built in chat, the skill auto-installs. If you built in Cowork, install it now under Settings -> Capabilities -> Skills -> Customize.

Paste in the kill-switch template (relocated from Part 3) so the generated Skill carries the soft guardrails from the start.

Kill-switch template: paste into the Skill's instructions

If any of the following are true, STOP immediately and notify me:

- The title or content contains [DRAFT], [TEST], or [HOLD].

- The data source returns zero rows or a 404 / 5xx error.

- The action would touch a [list of high-stakes accounts or contacts].

- Any required input is missing or null.

- You are not certain you understood my instruction. Ask one clarifying question instead of guessing.

Be literal. The more literal the better.
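To see how literal these rules are, here is a minimal Python sketch of the first four as plain checks. It is only an illustration, not how a Skill evaluates its instructions: `RunContext`, `stop_reasons`, and every field name are hypothetical, and the account names in the usage lines are made up. The fifth rule (ask when uncertain) is a judgment call for Claude and has no equivalent here.

```python
from dataclasses import dataclass, field
from typing import Optional

# Markers taken from the template's first rule.
BLOCKED_MARKERS = ("[DRAFT]", "[TEST]", "[HOLD]")


@dataclass
class RunContext:
    """Hypothetical snapshot of one run; all field names are assumptions."""
    title: str
    content: str
    row_count: int                      # rows returned by the data source
    http_status: int                    # status code from the data source
    target_accounts: list[str]          # accounts the action would touch
    required_inputs: dict[str, Optional[str]]
    high_stakes_accounts: set[str] = field(default_factory=set)


def stop_reasons(ctx: RunContext) -> list[str]:
    """Return every kill-switch rule that fires; an empty list means proceed."""
    reasons = []

    # Rule 1: title or content contains a blocked marker.
    text = f"{ctx.title}\n{ctx.content}"
    for marker in BLOCKED_MARKERS:
        if marker in text:
            reasons.append(f"found marker {marker}")

    # Rule 2: zero rows, or a 404 / 5xx response.
    if ctx.row_count == 0:
        reasons.append("data source returned zero rows")
    if ctx.http_status == 404 or 500 <= ctx.http_status <= 599:
        reasons.append(f"data source returned HTTP {ctx.http_status}")

    # Rule 3: the action would touch a high-stakes account.
    touched = sorted(set(ctx.target_accounts) & ctx.high_stakes_accounts)
    if touched:
        reasons.append("would touch high-stakes accounts: " + ", ".join(touched))

    # Rule 4: a required input is missing (None).
    missing = sorted(k for k, v in ctx.required_inputs.items() if v is None)
    if missing:
        reasons.append("missing required inputs: " + ", ".join(missing))

    return reasons


# Usage: a clean run yields no reasons; a flagged run lists each one.
clean = RunContext("Weekly report", "All good", 3, 200, ["acme"],
                   {"week": "current"}, {"bigco"})
flagged = RunContext("[DRAFT] Weekly report", "", 0, 503, ["bigco"],
                     {"week": None}, {"bigco"})
print(stop_reasons(clean))
print(stop_reasons(flagged))
```

Each rule maps to one unambiguous condition, which is the point of the template: if a rule cannot be stated this plainly, it is probably too vague to act as a guardrail.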

Then run the Skill once. Sandbox-safe. Watch Claude show its work. If anything looks off, hit the platform-level Stop button (the hard kill switch) and add a literal instruction to the Skill. Be literal. This is the back-10% review: not editing the output, but improving the Skill.

Feedback into the skill, not the output

Spend your time making the skill better, not the deliverable. Most people go back and forth editing every output. Put that feedback into the skill instead.

Schedule this with /schedule once it works.

You'll know you've got it when the Skill is installed, the kill-switch lines are pasted in, and the Skill has produced one output that satisfies your Definition of Done (or you've added one new instruction so the next run will).